T-6: Difference between revisions


<strong>Goal: </strong>

Complete the characterisation of the energy landscape of the spherical <math>p</math>-spin.

<br>

<strong>Techniques: </strong> saddle point, random matrix theory.

<br>

== Problems ==

=== Problem 6: the Hessian at the stationary points, and random matrix theory ===

This is a continuation of Problem 5. To get the complexity of the spherical <math>p</math>-spin, it remains to compute the expectation value of the absolute value of the determinant of the Hessian matrix: this is the goal of this problem. We will do this by exploiting results from random matrix theory discussed in <code>Tutorial and Exercise 4</code>.

<ol>

<li> <em> Gaussian random matrices. </em> Show that the matrix <math> M </math>, defined in Problem 5, is a GOE matrix, i.e. a matrix drawn from the Gaussian Orthogonal Ensemble, meaning that it is a symmetric matrix with distribution <math> P_N(M)= Z_N^{-1}\exp\left(-\frac{N}{4 \sigma^2} \text{Tr}\, M^2\right) </math>

where <math> Z_N </math> is a normalization constant. What is the value of <math> \sigma^2 </math>?

</li>

</ol>
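To make the GOE weight concrete, the following is a minimal sketch of how one could sample from it. It assumes only the distribution written above: since <math>\text{Tr}\, M^2 = \sum_i M_{ii}^2 + 2\sum_{i<j} M_{ij}^2</math>, the entries are independent Gaussians with variance <math>2\sigma^2/N</math> on the diagonal and <math>\sigma^2/N</math> off the diagonal. The function names, the matrix size and the value of <code>sigma</code> are illustrative choices, and the sketch uses the same <code>n</code> for the matrix size and for the factor in the exponent.

```python
import math
import random


def sample_goe(n, sigma, rng):
    """Draw an n x n symmetric matrix with weight exp(-n / (4 sigma^2) Tr M^2)."""
    off_std = sigma / math.sqrt(n)          # off-diagonal variance: sigma^2 / n
    diag_std = sigma * math.sqrt(2.0 / n)   # diagonal variance: 2 sigma^2 / n
    m = [[0.0] * n for _ in range(n)]
    for i in range(n):
        m[i][i] = rng.gauss(0.0, diag_std)
        for j in range(i + 1, n):
            m[i][j] = m[j][i] = rng.gauss(0.0, off_std)
    return m


def second_moment(m):
    """(1/n) Tr M^2, the second moment of the empirical eigenvalue density."""
    n = len(m)
    return sum(x * x for row in m for x in row) / n


rng = random.Random(0)
sigma = 1.5  # illustrative value, not the answer to the question above
m = sample_goe(200, sigma, rng)
# The ratio approaches 1 as n grows: the second moment concentrates on sigma^2.
print(second_moment(m) / sigma**2)
```

The second moment is used here as a cheap check because it requires no diagonalisation; comparing the full eigenvalue histogram with the asymptotic density would need a linear algebra library.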

<ol start="2">

<li><em> Eigenvalue density and concentration. </em> Let <math> \lambda_\alpha </math> be the eigenvalues of the matrix <math> M </math>. Show that the following identity holds:

<math display="block">

\mathbb{E}[|\text{det} \left(M - p \epsilon \mathbb{I} \right)|]= \mathbb{E}\left[\text{exp} \left((N-1) \int d \lambda \, \rho_{N-1}(\lambda) \, \log |\lambda - p \epsilon|\right) \right], \quad \quad \rho_{N-1}(\lambda)= \frac{1}{N-1} \sum_{\alpha=1}^{N-1} \delta (\lambda- \lambda_\alpha)

</math>

where <math>\rho_{N-1}(\lambda)</math> is the empirical eigenvalue density. It can be shown that if <math> M </math> is a GOE matrix, the probability distribution of the empirical density has a large deviation form with speed <math> N^2 </math>, meaning that <math> P_N[\rho] \sim e^{-N^2 \, g[\rho]} </math>, where <math> g[\cdot] </math> is now a functional. Using a saddle point argument, show that this implies

<math display="block">

\mathbb{E}\left[\text{exp} \left((N-1) \int d \lambda \, \rho_{N-1}(\lambda) \, \log |\lambda - p \epsilon|\right) \right]=\text{exp} \left[N \int d \lambda \, \rho_\infty(\lambda+p \epsilon) \, \log |\lambda|+ o(N) \right]

</math>

where <math> \rho_\infty(\lambda) </math> is the typical value of the eigenvalue density, which satisfies <math> g[\rho_\infty]=0 </math>.

</li>

</ol>

<ol start="3">

<li><em> The semicircle and the complexity.</em> The eigenvalue density of GOE matrices is self-averaging, and it is equal to

<math display="block">

\lim_{N \to \infty}\rho_N (\lambda)=\lim_{N \to \infty} \mathbb{E}[\rho_N(\lambda)]= \rho_\infty(\lambda)= \frac{1}{2 \pi \sigma^2}\sqrt{4 \sigma^2-\lambda^2 }, \quad \quad |\lambda| \leq 2 \sigma

</math>

<ul>

<!--<li>Check this numerically: generate matrices for various values of <math> N </math>, plot their empirical eigenvalue density and compare with the asymptotic curve. Is the convergence faster in the bulk, or in the edges of the eigenvalue density, where it vanishes? </li>-->

Combining all the results, show that the annealed complexity is

<math display="block">

\Sigma_{\text{a}}(\epsilon)= \frac{1}{2}\log [4 e (p-1)]- \frac{\epsilon^2}{2}+ I_p(\epsilon), \quad \quad I_p(\epsilon)= \frac{2}{\pi}\int d x \sqrt{1-\left(x- \frac{\epsilon}{ \epsilon_{\text{th}}}\right)^2}\, \log |x| , \quad \quad \epsilon_{\text{th}}= -2\sqrt{\frac{p-1}{p}}.

</math>

The integral <math> I_p(\epsilon)</math> can be computed explicitly, and one finds:

<math display="block">

I_p(\epsilon)=

\begin{cases}

\frac{\epsilon^2}{\epsilon_{\text{th}}^2}-\frac{1}{2} - \frac{\epsilon}{\epsilon_{\text{th}}}\sqrt{\frac{\epsilon^2}{\epsilon_{\text{th}}^2}-1}+ \log \left( \frac{\epsilon}{\epsilon_{\text{th}}}+ \sqrt{\frac{\epsilon^2}{\epsilon_{\text{th}}^2}-1} \right)- \log 2 & \text{if} \quad \epsilon \leq \epsilon_{\text{th}}\\

\frac{\epsilon^2}{\epsilon_{\text{th}}^2}-\frac{1}{2}-\log 2 & \text{if} \quad \epsilon > \epsilon_{\text{th}}

\end{cases}

</math>

Plot the annealed complexity, and determine numerically where it vanishes: why is this a lower bound on the ground state energy density?

</ul>

</li>

</ol>
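The numerical part of the question above can be sketched as follows. This is one possible approach rather than a reference solution: it evaluates the closed form of <math>I_p(\epsilon)</math> quoted above and locates the zero of <math>\Sigma_{\text{a}}</math> below <math>\epsilon_{\text{th}}</math> by bisection. The function names, the lower bracket <code>lo=-3.0</code> and the tolerance are illustrative assumptions; the bracket is adequate for moderate <math>p</math> and is checked explicitly.

```python
import math


def eps_th(p):
    """Threshold energy density, -2 sqrt((p - 1) / p)."""
    return -2.0 * math.sqrt((p - 1) / p)


def i_p(eps, p):
    """Closed form of I_p(eps) quoted in the text, with y = eps / eps_th."""
    y = eps / eps_th(p)
    value = y * y - 0.5 - math.log(2.0)
    if eps <= eps_th(p):  # below the threshold, y >= 1
        root = math.sqrt(max(y * y - 1.0, 0.0))
        value += -y * root + math.log(y + root)
    return value


def sigma_a(eps, p):
    """Annealed complexity as a function of the energy density."""
    return 0.5 * math.log(4.0 * math.e * (p - 1)) - 0.5 * eps * eps + i_p(eps, p)


def zero_of_complexity(p, lo=-3.0, tol=1e-10):
    """Bisection for the zero of sigma_a between lo and eps_th(p)."""
    hi = eps_th(p)
    if not (sigma_a(lo, p) < 0.0 < sigma_a(hi, p)):
        raise ValueError("the interval [lo, eps_th] does not bracket a zero")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if sigma_a(mid, p) > 0.0:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)


for p in (3, 4, 5):
    print(p, eps_th(p), zero_of_complexity(p))
```

Plotting <code>sigma_a</code> on a grid of energies with any plotting tool shows the same zero as the point where the curve crosses the horizontal axis.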

<ol start="4">

<li><em> The threshold and the stability.</em>

Sketch <math> \rho_\infty(\lambda+p \epsilon) </math> for different values of <math> \epsilon </math>; recalling that the Hessian encodes the stability of the stationary points, show that there is a transition in the stability of the stationary points at the critical value of the energy density

<math>

\epsilon_{\text{th}}= -2\sqrt{(p-1)/p}.

</math>

When are the stationary points stable local minima? When are they saddles? Why are the stationary points at <math> \epsilon= \epsilon_{\text{th}}</math> called <em> marginally stable </em>?

</li>

</ol>
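The stability criterion can be read off from the support of the shifted semicircle. The following worked step is a sketch that keeps <math>\sigma</math> general and assumes, as in the determinant above, that the Hessian spectrum at energy density <math>\epsilon</math> is that of <math>M - p \epsilon \mathbb{I}</math>:

<math display="block">
\rho_\infty(\lambda+p \epsilon) \neq 0 \quad \Longleftrightarrow \quad -2\sigma - p\epsilon \leq \lambda \leq 2\sigma - p \epsilon, \quad \quad \lambda_{\min}(\epsilon)= -2 \sigma - p \epsilon > 0 \quad \Longleftrightarrow \quad \epsilon < -\frac{2 \sigma}{p}.
</math>

Under this assumption all eigenvalues are positive below <math>-2\sigma/p</math>, the lower edge of the spectrum touches zero exactly at <math>\epsilon = -2\sigma/p</math>, and a finite fraction of eigenvalues is negative above it. Matching <math>-2\sigma/p</math> with the stated <math>\epsilon_{\text{th}}</math> would require <math>\sigma^2 = p(p-1)</math>, which can serve as a consistency check on the answer to the first question.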

<br>

== Back to dynamics: quenches, and dynamical transitions ==

Through Problems 5 and 6, we have shown that the energy landscape of the spherical <math>p</math>-spin model has exponentially many stationary points, and that there is a transition at the energy density <math>\epsilon_{\rm th}</math>: for <math>\epsilon>\epsilon_{\rm th}</math> the stationary points are saddles, for <math>\epsilon\leq \epsilon_{\rm th}</math> they are local minima. Let us try to deduce something about the system's dynamics from this.

<li> '''Gradient descent dynamics.''' The local minima are dynamically stable: if gradient descent has reached a local minimum and we apply a small perturbation to the configuration, gradient descent brings it back to the same minimum. These configurations are <em>trapping</em>. If we try to optimize the landscape, i.e. to reach the ground state, with gradient descent dynamics, we expect that we will not reach it easily, as we will be trapped by local minima. In fact, for the spherical <math>p</math>-spin model it can be shown that starting from random initial conditions and evolving the configuration with gradient descent (possibly with infinitesimal noise, to be sent to zero with a protocol),

<math display="block">

\lim_{t \to \infty} \lim_{N \to \infty} \frac{ E(\vec{\sigma}(t))}{N} = \epsilon_{\rm th} \neq \epsilon_{\rm gs}.

</math>

The system gets stuck at the energy density where local minima start to appear, and does not reach the deeper local minima.

</li>

<br>

<li> '''Quenches in temperature and equilibration.''' We can generalize this protocol to higher <math>T</math>: we draw the initial condition of the dynamics at random, and then we evolve the configuration with Langevin dynamics (gradient descent + noise):

<math display="block">

\frac{d \vec{\sigma}(t)}{dt}=- {\nabla}_\perp E(\vec{\sigma})+ {\vec{\eta}}_\perp(t), \quad \quad \langle \eta_i(t) \eta_j(t')\rangle= 2 T \delta_{ij} \delta(t-t')

</math>

In Langevin dynamics, <math>{\vec{\eta}}_\perp(t)</math> is a Gaussian vector at each time <math> t </math>, uncorrelated with the vectors at other times <math> t' \neq t </math>, with zero average and constant variance proportional to the temperature. It represents the action of a thermal bath on the system.

This dynamical protocol is called a <ins>quench</ins>. The question we can ask is: does the system equilibrate with the bath under this dynamics? If yes, we should see that

<math display="block">

\lim_{t \to \infty} \lim_{N \to \infty} \frac{E(\vec{\sigma}(t))}{N} = \epsilon_{\rm eq}(T),

</math>

where <math>\epsilon_{\rm eq}(T)</math> is the equilibrium energy density at the temperature <math> T </math>, the same one controlling the strength of the noise. Equilibrating with the bath would indeed imply that at large times the system visits the equilibrium energy shell uniformly.

</li>

<br>

<li> '''Dynamical transition.''' Now, in the spherical <math>p</math>-spin we know that if <math>\epsilon_{\rm eq}(T)>\epsilon_{\rm th}</math>, the energy shell has many stationary points, but they are all unstable saddles and do not trap the dynamics. We expect that this energy shell is relatively easy to explore dynamically, and that equilibration takes place. On the other hand, if <math>\epsilon_{\rm eq}(T)<\epsilon_{\rm th}</math>, in the equilibrium energy shell and at higher energies there are exponentially many local minima that trap the dynamics, and we expect that reaching equilibrium configurations will be difficult. This tells us that there exists a critical <math>T_d</math>, defined by

<math display="block">

\epsilon_{\rm eq}(T_d)=\epsilon_{\rm th},

</math>

such that for <math>T<T_d</math>

<math display="block">

\lim_{t \to \infty} \lim_{N \to \infty} \frac{E(\vec{\sigma}(t))}{N} \neq \epsilon_{\rm eq}(T).

</math>

The statement above for gradient descent corresponds to the special case <math>T=0</math>. <math>T_d</math> is called the <ins>dynamical transition temperature</ins>.

</li>

<br>

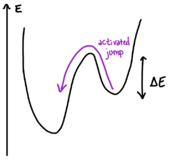

[[File:Activated Jump.png|thumb|right|x160px|Fig. 6 - Activated jump across an energy barrier.]] | |||

<li> '''Equilibration timescales.''' Does this mean that when <math>T<T_d</math>, the system <em>never</em> equilibrates? This is true only in the limit <math>N \to \infty</math>. When <math>N </math> is finite, there is a timescale <math>\tau_{\rm eq}(T, N)</math> beyond which the system equilibrates. However, this equilibration timescale in the spherical <math>p</math>-spin scales as

<math display="block">

\tau_{\rm eq}(T< T_d, N) \sim e^{N}.

</math>

This is again due to the presence of many local minima (metastable states) that are separated by <ins>extensive</ins> energy barriers. So, when we take <math>N \to \infty</math> before taking the large time limit, we are unable to see equilibration and we have a sharp transition, which becomes a crossover for finite <math>N</math>.

</li>

<br>

<li> '''Activation and Arrhenius law.''' Why exponential timescales? When the noise in the Langevin dynamics is weak (the temperature is small), the dynamics gets stuck in local minima for a very long time. This time depends crucially on the <em> energy barrier </em> that separates the minimum from the other configurations (see Fig. 6). The <ins>Arrhenius law</ins> states that the typical timescale <math> \tau</math> required to escape from a local minimum through a barrier of height <math> \Delta E </math> with thermal dynamics at inverse temperature <math> \beta </math> scales as <math>\tau \sim \tau_0 e^{\beta \, \Delta E} </math>. Since in the spherical <math>p</math>-spin we have <math> \Delta E \sim N \; \Delta \epsilon </math>, then <math> \tau_{\rm eq}(T< T_d, N)> \tau_0 e^{\beta \, \Delta E}\sim e^{N} </math>. A dynamics made of jumps from minimum to minimum through the crossing of energy barriers is called <ins> activated dynamics </ins>.

</li>
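The exponential growth of the escape time with system size can be illustrated with a few lines of code. This is a minimal sketch of the Arrhenius estimate only: the barrier per degree of freedom <code>delta_eps</code>, the temperature and the microscopic time <code>tau0</code> are hypothetical values chosen for illustration, not quantities derived for the spherical <math>p</math>-spin.

```python
import math


def arrhenius_time(barrier, temperature, tau0=1.0):
    """Arrhenius estimate tau = tau0 * exp(barrier / T), with beta = 1 / T."""
    return tau0 * math.exp(barrier / temperature)


delta_eps = 0.1    # hypothetical barrier height per degree of freedom
temperature = 0.5  # hypothetical bath temperature

# Extensive barriers, Delta E = N * delta_eps, give a timescale exponential in N.
for n in (10, 50, 100, 200):
    print(n, arrhenius_time(n * delta_eps, temperature))
```

Doubling <math>N</math> squares the ratio <math>\tau/\tau_0</math>, which is why the limit <math>N \to \infty</math> taken before the large time limit hides equilibration altogether.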

<br>
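The quench protocol described in this section can be sketched numerically for a small system. The code below is an illustrative discretisation, not the method used to obtain the results quoted above: it assumes <math>p=3</math>, an energy <math>E = -\sum_{i<j<k} J_{ijk}\sigma_i \sigma_j \sigma_k</math> with Gaussian couplings of variance <math>p!/N^{p-1}</math> (chosen so that the energy has variance <math>N</math>, consistent with the term <math>-\epsilon^2/2</math> in the complexity), an Euler step of size <code>dt</code>, and a renormalisation of the configuration onto the sphere <math>|\vec{\sigma}|^2 = N</math> after each step. All function names and parameter values are hypothetical.

```python
import itertools
import math
import random


def make_couplings(n, rng):
    """Gaussian couplings J_ijk for i < j < k, with variance 3! / n^2 (p = 3)."""
    std = math.sqrt(6.0) / n
    return {t: rng.gauss(0.0, std) for t in itertools.combinations(range(n), 3)}


def energy(couplings, s):
    return -sum(c * s[i] * s[j] * s[k] for (i, j, k), c in couplings.items())


def gradient(couplings, s):
    g = [0.0] * len(s)
    for (i, j, k), c in couplings.items():
        g[i] -= c * s[j] * s[k]
        g[j] -= c * s[i] * s[k]
        g[k] -= c * s[i] * s[j]
    return g


def project(v, s):
    """Remove from v its component along s (tangent plane of the sphere)."""
    overlap = sum(a * b for a, b in zip(v, s)) / len(s)
    return [a - overlap * b for a, b in zip(v, s)]


def normalize(s):
    """Rescale s so that |s|^2 = n."""
    scale = math.sqrt(len(s) / sum(x * x for x in s))
    return [scale * x for x in s]


def langevin(couplings, s, temperature, dt, steps, rng):
    """Euler discretisation of the projected Langevin equation."""
    amplitude = math.sqrt(2.0 * temperature * dt)
    for _ in range(steps):
        drift = project(gradient(couplings, s), s)
        noise = project([rng.gauss(0.0, 1.0) for _ in s], s)
        s = normalize([x - dt * d + amplitude * z
                       for x, d, z in zip(s, drift, noise)])
    return s


rng = random.Random(0)
n = 12
couplings = make_couplings(n, rng)
s0 = normalize([rng.gauss(0.0, 1.0) for _ in range(n)])
# temperature = 0 reduces the dynamics to gradient descent on the sphere.
s1 = langevin(couplings, s0, temperature=0.0, dt=0.01, steps=500, rng=rng)
print(energy(couplings, s0) / n, energy(couplings, s1) / n)
```

At such a small <math>N</math> the final energy density fluctuates strongly from sample to sample, so this sketch shows the protocol rather than the asymptotic values <math>\epsilon_{\rm th}</math> or <math>\epsilon_{\rm eq}(T)</math>.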

== Check out: key concepts ==

Metastable states, Hessian matrices, random matrix theory, landscape’s complexity.

Latest revision as of 17:27, 15 March 2026
