
<strong>Goal:</strong> This lecture is dedicated to a classical model of disordered systems: the directed polymer in random media. It was introduced to model vortices in superconductors and domain walls in magnetic films. We will focus on algorithms that identify the ground state or compute the free energy at temperature <math>T</math>, as well as on the Cole–Hopf transformation that maps this model onto the KPZ equation.

= Directed Polymers (''d = 1'') =

The configuration is described by a vector function <math>\vec{x}(t)</math>, where <math>t</math> is the internal coordinate and <math>\vec{x}\in\mathbb{R}^N</math> is the transverse displacement, so that the polymer lives in <math>D = 1 + N</math> dimensions.

Examples: vortex lines, DNA strands, fronts.

Although polymers may form loops, we restrict to directed polymers, i.e., configurations without overhangs or backward turns.

=Directed | = Directed Polymers on a lattice = | ||

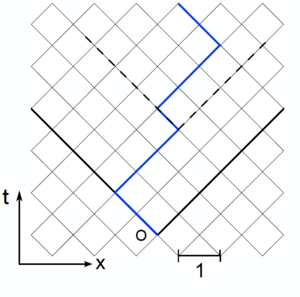

[[File:SketchDPRM.png|thumb|left|Sketch of the discrete Directed Polymer model. At each time the polymer grows either one step left or one step right. A random energy <math>V(\tau,x)</math> is associated to each node and the total energy is simply <math>E[x(\tau)] = \sum_{\tau=0}^t V(\tau,x)</math>.]] | |||

We introduce a lattice model for the directed polymer (see figure). In a companion notebook we provide the implementation of the powerful Dijkstra algorithm. Dijkstra allows one to identify the minimal energy among the exponential number of configurations <math>x(\tau)</math>: | |||

<math display="block"> | |||

E_{\min} = \min_{x(\tau)} E[x(\tau)]. | |||

</math> | |||
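Because every step goes from <math>\tau</math> to <math>\tau+1</math>, the lattice is a directed acyclic graph, and the same minimum can also be obtained with a simple dynamic-programming (transfer-matrix) recursion <math>E(\tau+1,x) = V(\tau+1,x) + \min[E(\tau,x-1), E(\tau,x+1)]</math>. The sketch below uses this recursion, which is a swapped-in technique and not the Dijkstra code of the companion notebook. The function names, the storage convention <code>V[tau][x + t]</code>, the Gaussian disorder and the flat boundary condition (free end point) are assumptions made for illustration; a brute-force enumeration of all <math>2^t</math> paths is included as a cross-check for small <math>t</math>.

```python
import itertools
import random

def ground_state_energy(V, t):
    """Minimal energy of a lattice directed polymer with x(0) = 0 and a free end.

    V[tau][x + t] is the random energy of node (tau, x), for -t <= x <= t.
    At each step the polymer moves x -> x - 1 or x -> x + 1.
    """
    inf = float("inf")
    width = 2 * t + 1
    # E[x + t] = minimal energy over paths going from (0, 0) to (tau, x)
    E = [inf] * width
    E[t] = V[0][t]
    for tau in range(1, t + 1):
        new = [inf] * width
        for i in range(width):
            best = min(E[i - 1] if i > 0 else inf,
                       E[i + 1] if i < width - 1 else inf)
            if best < inf:  # node (tau, x) is reachable from (0, 0)
                new[i] = V[tau][i] + best
        E = new
    return min(E)  # free end: minimize over the final position

def brute_force(V, t):
    """Enumerate all 2**t paths; only feasible for small t."""
    best = float("inf")
    for steps in itertools.product((-1, 1), repeat=t):
        x, energy = 0, V[0][t]
        for tau, s in enumerate(steps, start=1):
            x += s
            energy += V[tau][x + t]
        best = min(best, energy)
    return best

random.seed(0)
t = 10
V = [[random.gauss(0.0, 1.0) for _ in range(2 * t + 1)] for _ in range(t + 1)]
print(ground_state_energy(V, t), brute_force(V, t))  # the two values coincide
```

The recursion costs <math>O(t^2)</math> operations instead of <math>2^t</math>. For the droplet boundary condition <math>x(t)=0</math>, one would return <code>E[t]</code> instead of <code>min(E)</code> (reachable only for even <math>t</math>).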

We are also interested in the ground state configuration <math>x_{\min}(\tau)</math>. For both quantities we expect scale invariance, with two exponents: <math>\theta</math> for the energy and <math>\zeta</math> for the roughness:

<math display="block">
E_{\min} = c_\infty t + \kappa_1 t^{\theta}\chi,
\quad
x_{\min}(t/2) \sim \kappa_2 t^{\zeta}\tilde\chi.
</math>

<strong>Universal exponents:</strong> Both <math>\theta</math> and <math>\zeta</math> are independent of the lattice, the disorder distribution, the elastic constants and the boundary conditions.

<strong>Non-universal constants:</strong> <math>c_\infty</math>, <math>\kappa_1</math>, <math>\kappa_2</math> are of order 1 and depend on the lattice, the disorder distribution, the elastic constants, etc. However, <math>c_\infty</math> is independent of the boundary conditions.

<strong>Universal distributions:</strong> <math>\chi</math> and <math>\tilde\chi</math> are universal, but depend on the boundary conditions. Since 2000, a remarkable connection has been established between this model and the smallest eigenvalues of random matrices. In particular, we discuss two different boundary conditions:

* <strong>Droplet</strong>: <math>x(\tau=0) = x(\tau=t) = 0</math>. In this case, up to rescaling, <math>\chi</math> is distributed as the smallest eigenvalue of a GUE random matrix (Tracy–Widom distribution <math>F_2(\chi)</math>).
* <strong>Flat</strong>: <math>x(\tau=0) = 0</math> while the other end <math>x(\tau=t)</math> is free. In this case, up to rescaling, <math>\chi</math> is distributed as the smallest eigenvalue of a GOE random matrix (Tracy–Widom distribution <math>F_1(\chi)</math>).

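=== Entropy and scaling relation ===

It is useful to compute the entropy of the paths that start at <math>0</math> and reach <math>x</math> after <math>t</math> steps:

<math display="block">
\text{Entropy}= \ln\binom{t}{\frac{t-x}{2}} \approx t \ln 2 -\frac{x^2}{2 t} - \frac{1}{2}\ln\frac{\pi t}{2} + \dots,
</math>

where the dots denote terms that are subleading for <math>|x| \ll t</math>. The <math>x</math>-dependent part, <math>-x^2/(2t)</math>, is the entropic cost of a transverse displacement <math>x</math>. Balancing this cost, <math>x^2/t \sim t^{2\zeta-1}</math>, against the energy fluctuations <math>t^{\theta}</math>, dimensional analysis suggests

<math display="block">
\theta = 2\zeta - 1.
</math>

This relation is in fact exact, and it also holds for the continuum model.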
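The entropy expansion can be checked numerically. The sketch below (function names are illustrative) evaluates the exact logarithm of the binomial coefficient with <code>math.lgamma</code>, which avoids overflow for large <math>t</math>, and compares it with <math>t\ln 2 - x^2/(2t)</math> supplemented by the <math>x</math>-independent Stirling correction <math>-\frac12\ln(\pi t/2)</math>.

```python
import math

def log_binom(t, k):
    """Exact ln C(t, k) via the log-Gamma function."""
    return math.lgamma(t + 1) - math.lgamma(k + 1) - math.lgamma(t - k + 1)

def path_entropy(t, x):
    """ln of the number of +/-1 paths of length t ending at x (t - x must be even)."""
    return log_binom(t, (t - x) // 2)

def entropy_approx(t, x):
    """Large-t expansion: t ln 2 - x^2 / (2t) - (1/2) ln(pi t / 2)."""
    return t * math.log(2) - x**2 / (2 * t) - 0.5 * math.log(math.pi * t / 2)

for t, x in [(1000, 0), (1000, 20), (10000, 100)]:
    print(t, x, path_entropy(t, x), entropy_approx(t, x))
```

The difference <code>path_entropy(t, 0) - path_entropy(t, x)</code> isolates the entropic cost and should be close to <math>x^2/(2t)</math>.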

= Directed polymers in the continuum =

We now reanalyze the previous problem in the presence of quenched disorder. Instead of discussing interfaces, we will focus on directed polymers.

Let us consider polymers <math>x(\tau)</math> of length <math>t</math>. The energy of a given polymer configuration can be written as

<math display="block">
E[x(\tau)] = \int_0^t d\tau \left[ \frac{1}{2}\left(\frac{dx}{d\tau}\right)^2 + V(x(\tau),\tau) \right].
</math>

The first term describes the elastic energy of the polymer, while the second one is the disordered potential, which we assume to have zero mean and short-range (delta) correlations:

<math display="block">
\overline{V(x,\tau)} = 0,
\qquad
\overline{V(x,\tau)V(x',\tau')} = D\,\delta(x-x')\,\delta(\tau-\tau'),
</math>

where <math>D</math> is the disorder strength.
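To simulate the continuum model one has to discretize this delta-correlated potential. A common choice, assumed here and not prescribed by the text, is to draw independent Gaussian values on a grid of spacings <math>dx</math>, <math>d\tau</math> with variance <math>D/(dx\,d\tau)</math>: on the grid, <math>\delta(x-x')</math> becomes <math>\delta_{ij}/dx</math> and likewise in time. The function name <code>white_noise</code> is hypothetical.

```python
import math
import random

def white_noise(nx, nt, dx, dtau, D, rng):
    """Sample V on an (nt x nx) grid approximating delta-correlated noise.

    Each cell is an independent Gaussian with variance D / (dx * dtau),
    the lattice version of D * delta(x - x') * delta(tau - tau').
    """
    sigma = math.sqrt(D / (dx * dtau))
    return [[rng.gauss(0.0, sigma) for _ in range(nx)] for _ in range(nt)]

rng = random.Random(0)
dx, dtau, D = 0.1, 0.01, 1.0
V = white_noise(200, 200, dx, dtau, D, rng)
# For zero-mean noise the second moment equals the variance.
second_moment = sum(v * v for row in V for v in row) / (200 * 200)
print(second_moment * dx * dtau)  # close to D = 1.0
```

Note that the cell variance diverges as the grid is refined; only the product with the cell area <math>dx\,d\tau</math> stays fixed at <math>D</math>.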

== Polymer partition function and propagator of a quantum particle ==

Let us consider polymers starting at <math>0</math> and ending at <math>x</math>, at thermal equilibrium at temperature <math>T</math>. The partition function of the model reads

<math display="block">
Z(x,t) = \int_{x(0)=0}^{x(t)=x} \mathcal{D}x(\tau)\,
\exp\!\left[-\frac{1}{T}\int_0^t d\tau\left(\frac{1}{2}(\partial_\tau x)^2 + V(x(\tau),\tau)\right)\right].
</math>

Here, the partition function is written as a sum over all possible paths, i.e. over all polymer configurations that start at <math>0</math> and end at <math>x</math>, each weighted by its Boltzmann factor.
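On the lattice model introduced above, the path integral reduces to a finite sum over the <math>\pm 1</math> paths, <math>Z = \sum_{x(\tau)} e^{-E[x(\tau)]/T}</math>, with the elastic term replaced by the step constraint. A minimal sketch, assuming node energies stored as <code>V[tau][x + t]</code> and a free end point (the function names are hypothetical), computes it with the transfer-matrix recursion <math>Z(\tau,x) = e^{-V(\tau,x)/T}\,[Z(\tau-1,x-1) + Z(\tau-1,x+1)]</math> and returns <math>F = -T\ln Z</math>.

```python
import itertools
import math
import random

def lattice_free_energy(V, t, T):
    """F = -T ln Z for the lattice directed polymer with x(0) = 0 and a free end.

    The transfer-matrix recursion is carried out on ln Z with a log-sum-exp,
    so that low temperatures do not underflow.
    V[tau][x + t] is the random energy of node (tau, x), -t <= x <= t.
    """
    width = 2 * t + 1
    neg_inf = float("-inf")
    log_z = [neg_inf] * width
    log_z[t] = -V[0][t] / T  # the polymer starts at x = 0
    for tau in range(1, t + 1):
        new = [neg_inf] * width
        for i in range(width):
            a = log_z[i - 1] if i > 0 else neg_inf
            b = log_z[i + 1] if i < width - 1 else neg_inf
            m = max(a, b)
            if m > neg_inf:  # node reachable from (0, 0)
                new[i] = -V[tau][i] / T + m + math.log(math.exp(a - m) + math.exp(b - m))
        log_z = new
    m = max(log_z)
    return -T * (m + math.log(sum(math.exp(v - m) for v in log_z)))

def brute_force(V, t, T):
    """Enumerate all 2**t paths; returns (free energy, ground-state energy)."""
    energies = []
    for steps in itertools.product((-1, 1), repeat=t):
        x, energy = 0, V[0][t]
        for tau, s in enumerate(steps, start=1):
            x += s
            energy += V[tau][x + t]
        energies.append(energy)
    e_min = min(energies)
    z = sum(math.exp(-(e - e_min) / T) for e in energies)
    return e_min - T * math.log(z), e_min

random.seed(0)
t = 8
V = [[random.gauss(0.0, 1.0) for _ in range(2 * t + 1)] for _ in range(t + 1)]
for T in (1.0, 0.1, 0.01):
    F_bf, E_min = brute_force(V, t, T)
    print(T, lattice_free_energy(V, t, T), F_bf, E_min)
```

Since <math>e^{-E_{\min}/T} \le Z \le 2^t e^{-E_{\min}/T}</math>, the free energy satisfies <math>E_{\min} - T t\ln 2 \le F \le E_{\min}</math>, so <math>F \to E_{\min}</math> as <math>T \to 0</math>.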

Let us perform the change of variables <math>\tau = i t'</math>, so that <math>d\tau = i\,dt'</math> and <math>(\partial_\tau x)^2 = -(\partial_{t'} x)^2</math>. We also identify <math>T</math> with <math>\hbar</math> and <math>\tilde t = - i t</math> with the time. The partition function becomes

<math display="block">
Z(x,\tilde t) = \int_{x(0)=0}^{x(\tilde t)=x} \mathcal{D}x(t')\,
\exp\!\left[\frac{i}{\hbar}\int_0^{\tilde t} dt'\left(\frac{1}{2}(\partial_{t'} x)^2 - V(x(t'),t')\right)\right].
</math>

Note that <math>S[x] = \int_0^{\tilde t} dt'\left(\frac{1}{2}(\partial_{t'} x)^2 - V(x(t'),t')\right)</math> is the classical action of a particle with kinetic energy <math>\frac{1}{2}(\partial_{t'}x)^2</math> and time-dependent potential <math>V(x(t'),t')</math>, evolving from time zero to time <math>\tilde t</math>. In Feynman's path-integral formulation of quantum mechanics, <math>Z(x,\tilde t)</math> is therefore the propagator of the quantum particle.

=== Feynman–Kac formula ===

Let us derive the Feynman–Kac formula for <math>Z(x,t)</math> in the general case:

* First, focus on free paths and introduce the following probability:

<math display="block">
P[A,x,t] = \int_{x(0)=0}^{x(t)=x} \mathcal{D}x(\tau)\,
\exp\!\left[-\frac{1}{T}\int_0^t d\tau\,\frac{1}{2}(\partial_\tau x)^2\right]\,
\delta\!\left(\int_0^t d\tau\,V(x(\tau),\tau) - A\right).
</math>

* Second, define its moment generating function:

<math display="block">
Z_p(x,t) = \int_{-\infty}^{\infty} dA\,e^{-pA}P[A,x,t]
= \int_{x(0)=0}^{x(t)=x} \mathcal{D}x(\tau)\,
\exp\!\left[-\frac{1}{T}\int_0^t d\tau\,\frac{1}{2}(\partial_\tau x)^2
- p\int_0^t d\tau\,V(x(\tau),\tau)\right].
</math>

* Third, consider free paths evolving up to <math>t+dt</math> and reaching <math>x</math>:

<math display="block">
Z_p(x,t+dt)
= \left\langle e^{-p\int_0^{t+dt} d\tau\,V(x(\tau),\tau)} \right\rangle
= \left\langle e^{-p\int_0^{t} d\tau\,V(x(\tau),\tau)} \right\rangle e^{-pV(x,t)dt}
= [1-pV(x,t)dt+\dots]\left\langle Z_p(x-\Delta x,t)\right\rangle_{\Delta x}.
</math>

Here <math>\langle\cdots\rangle</math> is the average over all free paths, while <math>\langle\cdots\rangle_{\Delta x}</math> is the average over the last jump <math>\Delta x</math>, with <math>\langle\Delta x\rangle=0</math> and <math>\langle\Delta x^2\rangle = T\,dt</math>.

* Expanding <math>Z_p(x-\Delta x,t)</math> to second order in <math>\Delta x</math> and averaging over the last jump, to lowest order in <math>dt</math> we have

<math display="block">
Z_p(x,t+dt)
= Z_p(x,t) + dt\left[\frac{T}{2}\partial_x^2 Z_p - pV(x,t)Z_p\right] + O(dt^2).
</math>

Setting <math>p=1/T</math>, we obtain that the partition function is the solution of the Schrödinger-like equation:

<math display="block">
\partial_t Z(x,t)
= -\hat H Z
= -\left[-\frac{T}{2}\frac{d^2}{dx^2} + \frac{V(x,t)}{T}\right] Z(x,t),
\qquad
Z(x,t=0)=\delta(x).
</math>
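This equation can be integrated numerically. A minimal sketch, assuming an explicit Euler scheme on a finite box <math>[-L, L]</math> with <math>Z = 0</math> at the edges (a simple choice, not the only one), is shown below. The function names <code>solve_feynman_kac</code> and <code>z_free</code> are hypothetical, and the sketch only handles a smooth callable <math>V(x,t)</math>; a rough random potential would additionally require a choice of noise discretization. In the absence of disorder (<math>V = 0</math>) it should reproduce the free propagator <math>Z_{\text{free}}(x,t)=e^{-x^2/(2Tt)}/\sqrt{2\pi Tt}</math>.

```python
import math

def solve_feynman_kac(V, T, L, nx, dt, nsteps):
    """Explicit finite-difference solution of dZ/dt = (T/2) Z'' - (V/T) Z.

    Domain [-L, L] with nx grid points and Z = 0 at both edges. V(x, t) is a
    callable. The initial delta(x) is a single cell of height 1/dx at x = 0.
    For V = 0 the explicit scheme is stable if T * dt / dx**2 <= 1.
    """
    dx = 2 * L / (nx - 1)
    assert T * dt / dx**2 <= 1.0, "explicit scheme unstable: reduce dt"
    xs = [-L + i * dx for i in range(nx)]
    Z = [0.0] * nx
    Z[nx // 2] = 1.0 / dx  # discrete delta function at x = 0
    for n in range(nsteps):
        t = n * dt
        new = [0.0] * nx  # boundary values stay at zero
        for i in range(1, nx - 1):
            lap = (Z[i + 1] - 2 * Z[i] + Z[i - 1]) / dx**2
            new[i] = Z[i] + dt * (0.5 * T * lap - V(xs[i], t) / T * Z[i])
        Z = new
    return xs, Z

def z_free(x, t, T):
    """Free propagator exp(-x^2 / (2 T t)) / sqrt(2 pi T t)."""
    return math.exp(-x**2 / (2 * T * t)) / math.sqrt(2 * math.pi * T * t)

T, t_final, dt = 1.0, 1.0, 0.002
xs, Z = solve_feynman_kac(lambda x, t: 0.0, T, L=8.0, nx=321, dt=dt,
                          nsteps=int(round(t_final / dt)))
i0 = len(xs) // 2
print(Z[i0], z_free(0.0, t_final, T))  # agree up to discretization error
```

With <math>V=0</math> the scheme also conserves <math>\int dx\,Z</math> (up to losses at the far boundaries), as expected for the normalized free propagator.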

=== Remarks ===

<strong>Remark 1:</strong>

This is a diffusion equation with multiplicative noise <math>V(x,t)/T</math>. The Edwards–Wilkinson equation, by contrast, is a diffusion equation with additive noise.

<strong>Remark 2:</strong>

This Hamiltonian is time dependent because of the multiplicative noise <math>V(x,t)/T</math>. For a <strong>time-independent</strong> Hamiltonian, we can instead expand on the spectrum of the operator, which in general has two parts:

* A discrete set of eigenvalues <math>E_n</math> with eigenstates <math>\psi_n(x)</math>.
* A continuous part, where the states <math>\psi_E(x)</math> have energy <math>E</math>. We define the density of states <math>\rho(E)</math> such that the number of states with energy in <math>(E,E+dE)</math> is <math>\rho(E)\,dE</math>.

In this case <math>Z(x,t)</math> can be written as the sum of two contributions:

<math display="block">
Z(x,t)
= \left(e^{-\hat H t}\right)_{0\to x}
= \sum_n \psi_n(0)\psi_n^*(x)e^{-E_n t}
+ \int_0^\infty dE\,\rho(E)\,\psi_E(0)\psi_E^*(x)e^{-Et}.
</math>

In the absence of disorder, one finds the propagator of the free particle, which in the original variables reads:

<math display="block">
Z_{\text{free}}(x,t)=\frac{e^{-x^2/(2Tt)}}{\sqrt{2\pi Tt}}.
</math>
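The spectral formula can be checked numerically in a finite box, where the spectrum is purely discrete and only the sum over <math>n</math> survives. The sketch below assumes a second-order finite-difference Hamiltonian <math>\hat H = -\frac{T}{2}\partial_x^2</math> on <math>[-L, L]</math> with Dirichlet boundaries (the function name and grid parameters are hypothetical) and uses <code>numpy.linalg.eigh</code> for the eigenpairs. For a box much larger than <math>\sqrt{Tt}</math> it should reproduce <math>Z_{\text{free}}</math>.

```python
import numpy as np

def spectral_propagator(T, t, L=10.0, n=401):
    """Z(x, t) = sum_n psi_n(0) psi_n(x) exp(-E_n t) for H = -(T/2) d^2/dx^2.

    H is discretized with second-order finite differences on [-L, L] with
    Dirichlet boundaries; the box makes the spectrum discrete, and the
    continuum of the infinite line is recovered as L grows.
    """
    x = np.linspace(-L, L, n)
    dx = x[1] - x[0]
    main = np.full(n, T / dx**2)             # -(T/2) * (-2 / dx^2)
    off = np.full(n - 1, -T / (2 * dx**2))   # -(T/2) * (1 / dx^2)
    H = np.diag(main) + np.diag(off, 1) + np.diag(off, -1)
    E, vecs = np.linalg.eigh(H)              # real symmetric: real eigenvectors
    psi = vecs / np.sqrt(dx)                 # normalize so that sum |psi|^2 dx = 1
    i0 = n // 2                              # grid index of x = 0
    Z = (psi[i0, :] * np.exp(-E * t)) @ psi.T
    return x, Z

x, Z = spectral_propagator(T=1.0, t=1.0)
print(Z[len(x) // 2], 1 / np.sqrt(2 * np.pi))  # close to each other
```

Eigenvectors returned by <code>eigh</code> are normalized as vectors; dividing by <math>\sqrt{dx}</math> converts them into normalized wave functions, which is needed for the propagator to carry the correct <math>1/\sqrt{2\pi Tt}</math> prefactor.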

==== Hints: free particle in 1D ====

For a free particle in one dimension the Hamiltonian is <math>\hat H = -\frac{T}{2}\,\partial_x^2</math>.

'''Spectrum.'''

The spectrum is purely continuous. The eigenstates are plane waves

<math display="block">
\psi_k(x)=\frac{1}{\sqrt{2\pi}}e^{ikx},
\qquad
E_k=\frac{T k^2}{2},
</math>

with <math>k\in\mathbb{R}</math>. The states are delocalized and satisfy Dirac delta normalization

<math display="block">
\int_{-\infty}^{\infty} dx\,\psi_{k'}^*(x)\psi_k(x)=\delta(k-k').
</math>

'''Energy representation and density of states.'''

For a given energy <math>E>0</math> there are two degenerate states,

<math display="block">
\psi_E^{\pm}(x)=\frac{1}{\sqrt{2\pi}}\,e^{\pm i\sqrt{2E/T}\,x}.
</math>

The density of states of each branch <math>\sigma=\pm</math> (i.e. counting only <math>k>0</math>, since the sum over <math>\sigma</math> is taken separately below) is obtained from

<math display="block">
\rho(E)=\int_{0}^{\infty} dk\,\delta(E-E_k)=\frac{1}{\sqrt{2TE}},
\qquad
E_k=\frac{T k^2}{2}.
</math>

'''Propagator.'''

Using the spectral decomposition one can write

<math display="block">
Z(x,t)
=\int_0^{\infty} dE\,\rho(E)
\sum_{\sigma=\pm}
\psi_E^{\sigma}(0)\psi_E^{\sigma *}(x)\,e^{-Et}.
</math>

Changing variables back to <math>k</math>, this becomes a Gaussian integral, which yields

<math display="block">
Z_{\text{free}}(x,t)=\frac{e^{-x^2/(2Tt)}}{\sqrt{2\pi Tt}}.
</math>

Useful identity (valid also for complex <math>b</math>):

<math display="block">
\int_{-\infty}^{\infty} dx\,e^{-(a x^2+b x)}
=\sqrt{\frac{\pi}{a}}\,e^{\,b^2/(4a)},\qquad a>0.
</math>
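The energy integral can also be evaluated numerically, which checks the normalization of the density of states. In the sketch below (function names are illustrative), <math>\rho(E)</math> is the density of a single branch, <math>\int_0^\infty dk\,\delta(E-E_k) = 1/\sqrt{2TE}</math>; counting both branches in <math>\rho</math> and also summing over <math>\sigma</math> would double the result. Using <math>\sum_\sigma \psi_E^\sigma(0)\psi_E^{\sigma*}(x) = \cos(\sqrt{2E/T}\,x)/\pi</math> and the substitution <math>E = s^2</math>, which removes the <math>1/\sqrt{E}</math> singularity (<math>\rho(E)\,dE = \sqrt{2/T}\,ds</math>), the integral is computed with the trapezoidal rule.

```python
import math

def propagator_from_energy_integral(x, t, T, s_max=12.0, n=20_000):
    """Z(x, t) = int_0^inf dE rho(E) sum_sigma psi_E^sigma(0) psi_E^sigma*(x) e^{-E t}.

    Per-branch density rho(E) = 1 / sqrt(2 T E); with E = s**2 the measure
    becomes rho(E) dE = sqrt(2 / T) ds. Trapezoidal rule on [0, s_max].
    """
    ds = s_max / n
    c = math.sqrt(2 / T)
    total = 0.0
    for j in range(n + 1):
        s = j * ds
        f = c * math.cos(c * s * x) / math.pi * math.exp(-s * s * t)
        total += f * (0.5 if j in (0, n) else 1.0)
    return total * ds

def z_free(x, t, T):
    """Free propagator exp(-x^2 / (2 T t)) / sqrt(2 pi T t)."""
    return math.exp(-x**2 / (2 * T * t)) / math.sqrt(2 * math.pi * T * t)

for x, t, T in [(0.0, 1.0, 1.0), (1.5, 1.0, 1.0), (2.0, 0.5, 2.0)]:
    print(propagator_from_energy_integral(x, t, T), z_free(x, t, T))
```

The trapezoidal rule is very accurate here because the integrand is smooth, even in <math>s</math>, and negligible at <math>s_{\max}</math>.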

== Cole–Hopf Transformation ==

Replacing
* <math>T = 2\nu</math>
* <math>x = r</math>
* <math>Z(x,t) = \exp\!\left(\frac{\lambda}{2\nu}h(r,t)\right)</math>
* <math>-V(x,t)=\lambda\,\eta(r,t)</math>
in the equation for <math>Z(x,t)</math>, you get

<math display="block">
\partial_t h(r,t)= \nu \nabla^2 h(r,t)+ \frac{\lambda}{2}(\nabla h)^2 + \eta(r,t).
</math>

The KPZ equation!
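The substitution can be verified numerically without integrating a stochastic equation. In the sketch below, the smooth profile <math>h(r,t)</math> and the values of <math>\nu</math>, <math>\lambda</math> are arbitrary hypothetical choices; the noise <math>\eta</math> is defined so that <math>h</math> solves the KPZ equation exactly. Finite differences then check that <math>Z=\exp(\lambda h/2\nu)</math> satisfies <math>\partial_t Z = \frac{T}{2}\partial_r^2 Z - \frac{V}{T}Z</math> with <math>T=2\nu</math> and <math>V=-\lambda\eta</math>.

```python
import math

# Hypothetical parameters and test profile; any smooth h(r, t) works.
nu, lam = 0.5, 1.3

def h(r, t):
    return math.sin(r) * math.cos(t) + 0.2 * r * t

def dh_dt(r, t):
    return -math.sin(r) * math.sin(t) + 0.2 * r

def dh_dr(r, t):
    return math.cos(r) * math.cos(t) + 0.2 * t

def d2h_dr2(r, t):
    return -math.sin(r) * math.cos(t)

def eta(r, t):
    """Noise that makes h an exact KPZ solution: eta = h_t - nu h'' - (lam/2) h'^2."""
    return dh_dt(r, t) - nu * d2h_dr2(r, t) - 0.5 * lam * dh_dr(r, t) ** 2

def Z(r, t):
    """Cole-Hopf transform Z = exp(lam h / (2 nu))."""
    return math.exp(lam * h(r, t) / (2 * nu))

def feynman_kac_residual(r, t, eps=1e-3):
    """dZ/dt - [(T/2) Z'' - (V/T) Z] with T = 2 nu, V = -lam eta (central differences)."""
    T = 2 * nu
    V = -lam * eta(r, t)
    Z_t = (Z(r, t + eps) - Z(r, t - eps)) / (2 * eps)
    Z_rr = (Z(r + eps, t) - 2 * Z(r, t) + Z(r - eps, t)) / eps**2
    return Z_t - (0.5 * T * Z_rr - V / T * Z(r, t))

for r, t in [(0.0, 0.5), (1.0, 1.0), (-2.0, 0.3)]:
    print(r, t, feynman_kac_residual(r, t))  # O(eps**2): close to zero
```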

We can establish a KPZ/directed polymer dictionary, valid in any dimension. Let us remark that, since <math>T\ln Z = 2\nu\cdot\frac{\lambda h}{2\nu} = \lambda h</math>, the free energy of the polymer is

<math display="block">
F = -T\ln Z(x,t) = -\lambda\, h(r,t).
</math>

At low temperature, the free energy approaches the ground state energy <math>E_{\min}</math>.

{| class="wikitable"
|+ KPZ / Directed Polymer dictionary
|-
! KPZ quantity !! KPZ scaling !! Directed polymer quantity !! Directed polymer scaling
|-
| <math>r</math>
| <math>r \sim t^{1/z}</math>
| <math>x</math>
| <math>x \sim t^{\zeta}</math>
|-
| <math>t</math>
| (time)
| <math>t</math>
| (polymer length)
|-
| <math>h</math>
| <math>h(r,t) \sim r^{\alpha} \sim t^{\alpha/z}</math>
| <math>F,\,E_{\min}</math>
| <math>\overline{(E_{\min}-\overline{E_{\min}})^2} \sim t^{2\theta}</math>
|}

We conclude that

<math display="block">
\theta = \alpha/z,
\quad
\zeta = 1/z.
</math>

Moreover, the scaling relation <math>\theta = 2\zeta - 1</math> is a reincarnation of the Galilean invariance relation <math>\alpha + z = 2</math>: dividing the latter by <math>z</math> gives <math>\alpha/z = 2/z - 1</math>, i.e. <math>\theta = 2\zeta - 1</math>.
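As a worked example, the dictionary can be applied to the exact KPZ exponents in 1+1 dimensions, <math>\alpha = 1/2</math> and <math>z = 3/2</math> (well-known results, not derived in this lecture), which correspond to a polymer in <math>D = 1+1</math> dimensions (<math>N = 1</math>). The helper name <code>dp_exponents</code> is hypothetical; exact rational arithmetic avoids rounding in the checks.

```python
from fractions import Fraction

def dp_exponents(alpha, z):
    """Map KPZ exponents (alpha, z) to directed-polymer exponents (theta, zeta)."""
    return alpha / z, 1 / z

# Exact KPZ exponents in 1+1 dimensions.
alpha, z = Fraction(1, 2), Fraction(3, 2)
theta, zeta = dp_exponents(alpha, z)
print(theta, zeta)            # 1/3 2/3
assert alpha + z == 2         # Galilean invariance
assert theta == 2 * zeta - 1  # scaling relation theta = 2 zeta - 1
```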

Latest revision as of 22:02, 1 March 2026
