T-6: Difference between revisions

Line 86:

<li> '''Quenches.''' We can generalize this protocol to higher <math>T</math>: we draw the initial condition of the dynamics at random, and then we evolve the configuration with Langevin dynamics (gradient descent + noise):

<math display="block">
\frac{d \vec{\sigma}(t)}{dt}=- \nabla_\perp E(\vec{\sigma})+ \vec{\eta}_\perp(t), \quad \quad \langle \eta_i(t) \eta_j(t')\rangle= 2 T \delta_{ij} \delta(t-t')
</math>

In Langevin dynamics, <math>\vec{\eta}_\perp(t)</math> is a Gaussian vector at each time <math> t </math>, uncorrelated with the vectors at other times <math> t' \neq t </math>, with zero mean and a constant variance proportional to the temperature. It effectively represents the action of a thermal bath on the system.
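A discretized version of this dynamics can be sketched in a few lines. The block below is a minimal Euler-Maruyama sketch, not the method used in the course: the function names (`project_tangent`, `langevin_step`, `quench`), the time step, and the toy linear energy <math>E(\vec{\sigma}) = -\sum_i h_i \sigma_i</math> are all assumptions made for illustration, and the spherical constraint <math>|\vec{\sigma}|^2 = N</math> is enforced by simply rescaling after each step. Only the Python standard library is used.

```python
import math
import random


def project_tangent(v, s):
    """Remove from v its component along s, assuming |s|^2 = len(s)."""
    overlap = sum(vi * si for vi, si in zip(v, s)) / len(s)
    return [vi - overlap * si for vi, si in zip(v, s)]


def langevin_step(s, grad_energy, temperature, dt, rng):
    """One Euler-Maruyama step of Langevin dynamics on the sphere."""
    n = len(s)
    force = project_tangent(grad_energy(s), s)
    noise = project_tangent([rng.gauss(0.0, 1.0) for _ in range(n)], s)
    amplitude = math.sqrt(2.0 * temperature * dt)  # from <eta eta> = 2 T delta
    moved = [si - dt * fi + amplitude * xi
             for si, fi, xi in zip(s, force, noise)]
    # Rescale back onto the sphere |s|^2 = n (stands in for the constraint).
    scale = math.sqrt(n / sum(x * x for x in moved))
    return [x * scale for x in moved]


def quench(grad_energy, n, temperature, dt, steps, rng):
    """Draw a random point on the sphere and evolve it for `steps` steps."""
    s = [rng.gauss(0.0, 1.0) for _ in range(n)]
    scale = math.sqrt(n / sum(x * x for x in s))
    s = [x * scale for x in s]
    for _ in range(steps):
        s = langevin_step(s, grad_energy, temperature, dt, rng)
    return s


# Toy energy (an assumption, not the p-spin): E(s) = -sum_i h_i s_i.
rng = random.Random(0)
n = 50
h = [rng.gauss(0.0, 1.0) for _ in range(n)]
final = quench(lambda s: [-hi for hi in h], n,
               temperature=0.0, dt=0.01, steps=2000, rng=rng)
energy = -sum(hi * si for hi, si in zip(h, final))
ground_state = -math.sqrt(n * sum(hi * hi for hi in h))
print(energy, ground_state)
```

At <math>T=0</math> the noise vanishes and the toy landscape has a single minimum, so the quench should reach the ground state. To study the model discussed here, one would pass the gradient of the <math>p</math>-spin energy as `grad_energy` and set `temperature` to a positive value.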

Revision as of 16:58, 15 March 2026

Goal:

Complete the characterisation of the energy landscape of the spherical <math>p</math>-spin.

Techniques: saddle point, random matrix theory.

Problems

Problem 6: the Hessian at the stationary points, and random matrix theory

This is a continuation of problem 5. To get the complexity of the spherical <math>p</math>-spin, it remains to compute the expectation value of the determinant of the Hessian matrix: this is the goal of this problem. We will do this exploiting results from random matrix theory discussed in the Tutorial and Exercise 4.

- Gaussian Random matrices. Show that the matrix <math>M</math>, defined in Problem 5, is a GOE matrix, i.e. a matrix taken from the Gaussian Orthogonal Ensemble, meaning that it is a symmetric matrix with distribution <math>P_N(M)= Z_N^{-1} e^{-\frac{N}{4 \sigma^2} \text{Tr}\, M^2}</math>, where <math>Z_N</math> is a normalization. What is the value of <math>\sigma^2</math>?

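- Eigenvalue density and concentration. Let <math>\lambda_\alpha</math> be the eigenvalues of the matrix <math>M</math>. Show that the following identity holds: <math>\overline{|\text{det} \left(M - p \epsilon \mathbb{I} \right)|}= \overline{\text{exp} \left[(N-1) \int d \lambda \, \rho_N(\lambda) \, \log |\lambda - p \epsilon| \right]}</math>, where <math>\rho_{N}(\lambda)= \frac{1}{N-1} \sum_{\alpha=1}^{N-1} \delta (\lambda- \lambda_\alpha)</math> is the empirical eigenvalue distribution. It can be shown that if <math>M</math> is a GOE matrix, the distribution of the empirical distribution has a large deviation form with speed <math>N^2</math>, meaning that <math>P_N[\rho] = e^{-N^2 \, g[\rho]}</math>, where now <math>g[\cdot]</math> is a functional. Using a saddle point argument, show that this implies <math>\overline{\text{exp} \left[(N-1) \int d \lambda \, \rho_N(\lambda) \, \log |\lambda - p \epsilon| \right]}=\text{exp} \left[N \int d \lambda \, \rho_\infty(\lambda+p \epsilon) \, \log |\lambda|+ o(N) \right]</math>, where <math>\rho_\infty(\lambda)</math> is the typical value of the eigenvalue density, which satisfies <math>g[\rho_\infty]=0</math>.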

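- The semicircle and the complexity. The eigenvalue density of GOE matrices is self-averaging, and in the limit of large <math>N</math> it is equal to the semicircle law

<math display="block">\rho_\infty(\lambda)= \frac{1}{2 \pi \sigma^2}\sqrt{4 \sigma^2-\lambda^2}, \quad \quad |\lambda| \leq 2 \sigma,</math>

where <math>\sigma^2/N</math> is the variance of the off-diagonal entries of the matrix.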

Combining all the results, show that the annealed complexity is

The integral can be computed explicitly, and one finds:

Plot the annealed complexity, and determine numerically where it vanishes: why is this a lower bound on the ground state energy density?

- The threshold and the stability. Sketch the eigenvalue density of the Hessian for different values of the energy density <math>\epsilon</math>; recalling that the Hessian encodes the stability of the stationary points, show that there is a transition in the stability of the stationary points at a critical value of the energy density, the threshold <math>\epsilon_{\text{th}}</math>. When are the critical points stable local minima? When are they saddles? Why are the stationary points at <math>\epsilon = \epsilon_{\text{th}}</math> called marginally stable?
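The concentration of the determinant can be checked numerically before attempting the saddle point argument. The sketch below samples a GOE matrix, shifts it by a constant, and compares the logarithm of the absolute determinant per dimension with the semicircle prediction. It rests on assumptions that are not taken from this page: the normalization (off-diagonal entries of variance <math>\sigma^2/N</math>, diagonal entries of variance <math>2\sigma^2/N</math>), the standard closed form <math>\int d\lambda \, \rho_\infty(\lambda) \log|\lambda - x| = \frac{x^2}{4\sigma^2} - \frac{1}{2} + \log \sigma</math>, valid for <math>|x| \leq 2\sigma</math>, and all function names, which are hypothetical. Only the Python standard library is used, so the matrix size is kept small.

```python
import math
import random


def sample_goe(n, sigma, rng):
    """Symmetric matrix with density proportional to exp(-n Tr M^2 / (4 sigma^2))."""
    m = [[0.0] * n for _ in range(n)]
    for i in range(n):
        m[i][i] = rng.gauss(0.0, sigma * math.sqrt(2.0 / n))
        for j in range(i + 1, n):
            m[i][j] = m[j][i] = rng.gauss(0.0, sigma / math.sqrt(n))
    return m


def log_abs_det(a):
    """log|det a| by Gaussian elimination with partial pivoting."""
    a = [row[:] for row in a]  # work on a copy
    n = len(a)
    total = 0.0
    for k in range(n):
        pivot = max(range(k, n), key=lambda r: abs(a[r][k]))
        a[k], a[pivot] = a[pivot], a[k]
        total += math.log(abs(a[k][k]))
        for r in range(k + 1, n):
            factor = a[r][k] / a[k][k]
            for c in range(k + 1, n):
                a[r][c] -= factor * a[k][c]
    return total


def semicircle_log_potential(x, sigma):
    """Integral of rho_inf(lambda) log|lambda - x|, assuming |x| <= 2 sigma."""
    return x * x / (4.0 * sigma ** 2) - 0.5 + math.log(sigma)


def empirical_log_det(n, sigma, x, rng):
    """(1/n) log|det(M - x I)| for a single GOE sample M."""
    m = sample_goe(n, sigma, rng)
    for i in range(n):
        m[i][i] -= x
    return log_abs_det(m) / n


rng = random.Random(0)
for x in (0.0, 1.0):
    print(x, empirical_log_det(80, 1.0, x, rng), semicircle_log_potential(x, 1.0))
```

A single sample should already sit close to the prediction, which is the practical meaning of self-averaging: fluctuations of the log-determinant per dimension vanish as the matrix size grows. The same helper can be used to explore shifts near the edge <math>|x| = 2\sigma</math>, where the shifted spectrum first touches zero.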

Back to dynamics: quenches, and dynamical transitions

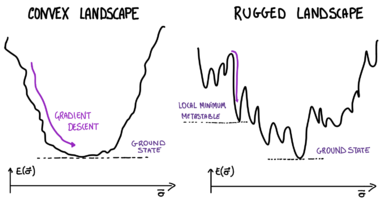

Through Problems 5 and 6, we have shown that the energy landscape of the spherical <math>p</math>-spin model has exponentially many stationary points [*], and that there is a transition at the threshold energy density <math>\epsilon_{\text{th}}</math>: for <math>\epsilon > \epsilon_{\text{th}}</math> the stationary points are saddles, for <math>\epsilon < \epsilon_{\text{th}}</math> they are local minima. Let us try to deduce something about the system's dynamics from this.

- [*] - This quantity looks similar to the entropy we computed for the REM in Problem 1. However, while the entropy counts all configurations at a given energy density, the complexity accounts only for the stationary points.

Check out: key concepts

Metastable states, Hessian matrices, random matrix theory, landscape’s complexity.