T-I

<strong>Goal: </strong> understanding the energy landscape of the simplest spin-glass model, the Random Energy Model (REM). <br>

<strong> Techniques: </strong> probability theory.

<br>

<br>

== Large-N disordered systems: a probabilistic dictionary ==

<br>

<ul>

<li> <ins>'''Notation.'''</ins> We will discuss disordered systems with <math> N </math> degrees of freedom (for instance, for a spin system on a lattice of size <math>L</math> in dimension <math> d</math>, <math> N = L^d </math>). The disorder can have various sources (for example, the interactions between the degrees of freedom may be random). Since the systems are random, the quantities that describe their properties (the free energy, the number of configurations that satisfy a certain property, the magnetization, etc.) are also random variables, with a distribution. In this discussion we denote these random variables generically by <math> X_N, Y_N </math> (the subscript denotes the number of degrees of freedom) and their distributions by <math> P_{X_N}(x), P_{Y_N}(y) </math>. We denote by <math> \mathbb{E}({\cdot})</math> the average over the disorder (equivalently, over the distribution <math> P_{X_N}(x) </math> of the quantity, induced by the disorder). The goal of statistical physics is to characterize the behavior of these quantities in the limit <math> N \to \infty</math>. In general, one deals with exponentially scaling quantities:

<math display="block">

X_N = e^{N Y_N}

</math>

where <math> Y_N </math> remains of order one as <math> N \to \infty </math>.

</li>

<br>

<li> <ins>'''Typical and rare.'''</ins> The typical value of a random variable is the value at which its distribution peaks (the most probable value). Values in the tails of the distribution, where the probability density is small (for instance, vanishing as <math> N \to \infty </math>), are said to be rare. In general, the average and the typical value of a random variable need not coincide: this happens when the average is dominated by values of the random variable that lie in the tail of the distribution, i.e. by rare events with a small probability of occurrence.

<br>

<br>

<code>Short exercise. </code> Consider a random variable <math> X </math> with a log-normal distribution, meaning that <math> X=e^Y </math> where <math> Y </math> is a Gaussian random variable with average <math> \mu </math> and variance <math> \sigma^2 </math>. Show that <math> X^{typ}=e^\mu </math> (understood as <math> e^{Y^{typ}} </math>, which is also the median of <math> X </math>) while <math> \mathbb{E}({X})=e^{\mu+\frac{\sigma^2}{2}} </math>, so that the average value is larger than the typical one.

</li>

<br>

<li> <ins>'''Self-averagingness.'''</ins> The physics of disordered systems is described by quantities that are distributed when <math> N </math> is finite: they take different values from sample to sample of the system, where a sample corresponds to one "realization" of the disorder. However, for the relevant quantities, sample-to-sample fluctuations are suppressed when <math> N \to \infty</math>. These quantities are said to be <ins>self-averaging</ins>.

A random variable <math> Y_N </math> is self-averaging when, in the limit <math> N \to \infty </math>, its distribution concentrates around the average, collapsing to a deterministic value:

<math display="block">

\lim_{N \to \infty} Y_N =\lim_{N \to \infty} \mathbb{E}({Y_N}):= {Y_\infty}, \quad \quad \mathbb{E}({Y_N})=\int \, dy\, P_{Y_N}(y)\, y

</math>

This happens when its fluctuations are small compared to the average, meaning that <sup>[[#Notes|[*] ]]</sup>

<math display="block">

\lim_{N \to \infty} \frac{\mathbb{E}(Y_N^2)}{\mathbb{E}(Y_N)^2}=1.

</math>

For self-averaging quantities, in the limit <math> N \to \infty </math> the distribution collapses to a single value <math> {Y_\infty}</math>, which is both the limit of the average and the typical value of the quantity: <ins><em> sample-to-sample </em> fluctuations are suppressed</ins> when <math>N </math> is large. When the random variable is not self-averaging, it remains distributed in the limit <math> N \to \infty </math>.

</li>

<br>

<code>Example 1. </code> Consider the partition function of a disordered system at inverse temperature <math> \beta</math>, <math> Z_N(\beta) </math>. When <math> N </math> is large this random variable scales exponentially, <math> Z_N(\beta) \sim e^{-\beta N f_N(\beta)} </math>, where <math> f_N(\beta) </math> is the free energy density. This scaling means that the random variable <math> \beta f_N=-N^{-1}\log Z_N </math> remains of order one when <math> N \to \infty </math>, and we can define its distribution in this limit. In all the models of disordered systems we will consider in these lectures, the free energy not only has a well-defined distribution in the limit, but it is also self-averaging: it does not fluctuate from sample to sample when <math> N </math> is large. The same holds for all the thermodynamic observables, which can be obtained by taking derivatives of the free energy. The physics of the system does not depend on the particular sample.

<br>

<br>

<code>Example 2. </code> While intensive quantities like <math> f_N </math> are self-averaging, exponentially scaling quantities like the partition function <math> Z_N </math> are not necessarily so: in particular, we will see that they are not when the system is in a glassy phase. Another example is given in Problem 1 below, where <math> X_N \to \mathcal{N}_N(E) </math> and <math> Y_N \to S_N(E/N) </math>.

<br>

<br>

<li> <ins>'''Quenched averages.'''</ins> Consider exponentially scaling quantities like <math> X_N = e^{N Y_N} </math>, which occur very naturally in statistical physics. How can one obtain <math> Y^{\text{typ}} </math> from <math> X_N </math>? If we know how to compute <math> X_N^{\text{typ}} </math>, then:

<math display="block">

Y^{\text{typ}} = \lim_{N \to \infty} \frac{\log X_N^{\text{typ}}}{N}.

</math>

If <math> Y_N </math> is self-averaging, we can also use

<math display="block">

Y^{\text{typ}} =\lim_{N \to \infty} \mathbb{E}(Y_N)= \lim_{N \to \infty} \frac{\mathbb{E}(\log X_N)}{N}.

</math>

In the language of disordered systems, computing the typical value of <math> X_N </math> through the average of its logarithm corresponds to performing a <ins> quenched average</ins>: from this average, one extracts the correct asymptotic value of the self-averaging quantity <math> Y_N </math>.

</li><br>

<li> <ins>'''Annealed averages.'''</ins> The quenched average does not necessarily coincide with the <ins> annealed average</ins>, defined as:

<math display="block">

Y_{\text{a}} = \lim_{N \to \infty} \frac{\log \mathbb{E}(X_N)}{N}.

</math>

In fact, <math> \mathbb{E}(\log X_N) \leq \log \mathbb{E}(X_N)</math> always holds, by Jensen's inequality and the concavity of the logarithm; hence <math> Y^{\text{typ}} \leq Y_{\text{a}} </math> when <math> Y_N </math> is self-averaging.

When the inequality remains strict after dividing by <math> N </math> and taking <math> N \to \infty </math>, so that quenched and annealed averages differ, <math> X_N </math> is not self-averaging and its average value is exponentially larger than its typical value (because the average is dominated by rare events). In this case, to get the correct limit of the self-averaging quantity <math> Y_N </math> one has to perform the quenched average.<sup>[[#Notes|[**] ]]</sup> This is what happens in many cases in disordered systems, for example in glassy phases. Computing quenched averages sometimes requires ad hoc techniques (e.g., the replica trick).

</li>

</ul>
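The log-normal short exercise above can be checked numerically. The sketch below is a minimal illustration using only Python's standard library; the function name <code>lognormal_typical_vs_mean</code>, the parameter values and the sample size are arbitrary choices made here. It estimates the typical value by the sample median (which, for the log-normal, equals <math>e^{\mu}</math>) and compares the sample mean with <math>e^{\mu+\sigma^2/2}</math>.

```python
import math
import random
import statistics


def lognormal_typical_vs_mean(mu, sigma, n_samples=200_000, seed=1):
    """Sample X = exp(Y), with Y Gaussian of mean mu and variance sigma**2.

    Returns (sample median of X, e**mu, sample mean of X, e**(mu + sigma**2 / 2)).
    The sample median serves as the estimate of the typical value e**mu.
    """
    rng = random.Random(seed)  # fixed seed, so the output is reproducible
    xs = [math.exp(rng.gauss(mu, sigma)) for _ in range(n_samples)]
    return (
        statistics.median(xs),
        math.exp(mu),
        statistics.fmean(xs),
        math.exp(mu + sigma**2 / 2),
    )


if __name__ == "__main__":
    median, typ, mean, avg = lognormal_typical_vs_mean(mu=0.0, sigma=1.0)
    print(f"sample median {median:.3f}   e^mu             = {typ:.3f}")
    print(f"sample mean   {mean:.3f}   e^(mu+sigma^2/2) = {avg:.3f}")
```

With <math>\mu=0</math> and <math>\sigma=1</math> the median stays close to 1 while the mean approaches <math>e^{1/2}\approx 1.65</math>. Increasing <math>\sigma</math> widens the gap, and the sample mean becomes noisier because it is controlled by the rare large values of <math>X</math>.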

<div style="font-size:89%">

: <small>[*]</small> - See here for a note on the equivalence of these two criteria.

: <small>[**]</small> - Notice that the converse is not true: one can have situations in which the partition function is not self-averaging, but the quenched free energy still coincides with the annealed one.

</div>
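Quenched and annealed averages can already differ in a toy example, chosen here purely for illustration (it is not a model discussed in the text): <math>X_N=e^{N Y_N}</math> with <math>Y_N=\frac{1}{N}\sum_{i=1}^N y_i</math> and the <math>y_i</math> independent Gaussians of mean <math>\mu</math> and variance <math>\sigma^2</math>. Then <math>Y_N</math> is self-averaging with quenched value <math>\mu</math>, whereas <math>\log\mathbb{E}(X_N)/N=\mu+\sigma^2/2</math>, and <math>\mathbb{E}(X_N^2)/\mathbb{E}(X_N)^2=e^{N\sigma^2}</math> diverges, so <math>X_N</math> is not self-averaging. The sketch below (hypothetical helper names, standard library only) estimates both averages from samples.

```python
import math
import random


def log_mean_exp(values):
    """Numerically stable log(mean(exp(v) for v in values))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values) / len(values))


def quenched_vs_annealed(n, mu=0.0, sigma=1.0, n_samples=2000, seed=0):
    """Toy model (illustrative assumption): X_N = exp(y_1 + ... + y_N), with the
    y_i i.i.d. Gaussian(mu, sigma**2), so that Y_N = log(X_N) / N.

    Returns (quenched estimate E(log X_N) / N, annealed estimate log E(X_N) / N
    from the same samples, exact annealed value mu + sigma**2 / 2).
    """
    rng = random.Random(seed)
    # One value of log X_N = N * Y_N per disorder sample.
    log_x = [sum(rng.gauss(mu, sigma) for _ in range(n)) for _ in range(n_samples)]
    quenched = sum(log_x) / (n_samples * n)
    annealed_empirical = log_mean_exp(log_x) / n
    annealed_exact = mu + sigma**2 / 2
    return quenched, annealed_empirical, annealed_exact


if __name__ == "__main__":
    for n in (1, 10, 100):
        q, a_emp, a_exact = quenched_vs_annealed(n)
        print(f"N={n:4d}  quenched {q:+.3f}  annealed (samples) {a_emp:+.3f}"
              f"  annealed (exact) {a_exact:+.3f}")
```

For <math>N=1</math> the sample-based annealed estimate is close to <math>\mu+\sigma^2/2</math>; for larger <math>N</math> it falls well below it, because <math>\mathbb{E}(X_N)</math> is dominated by samples with <math>Y_N\approx\mu+\sigma^2</math>, whose probability is of order <math>e^{-N\sigma^2/2}</math> and which a finite set of samples essentially never contains. The quenched estimate, in contrast, is stable.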

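== Problems ==

This problem and next week's problem deal with the Random Energy Model (REM), introduced in [1]. In the REM the system can take <math> 2^N </math> configurations, labeled by <math> \alpha = 1, \dots, 2^N </math>. Each configuration <math> \alpha </math> is assigned a random energy <math> E_\alpha </math>. The random energies are independent, drawn from a Gaussian distribution

<math display="block">

p(E) = \frac{1}{\sqrt{2 \pi N}}\, e^{-\frac{E^2}{2N}}.

</math>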
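A single REM sample can be generated directly from the definition. In this sketch the function name is hypothetical, and the energies are assumed to be Gaussian with mean 0 and variance <math>N</math> (the convention under which the REM entropy takes the form <math>\log 2-\epsilon^2/2</math>). The list has <math>2^N</math> entries, so this is only practical for small <math>N</math> (say up to about 20).

```python
import math
import random


def sample_rem_energies(n, seed=None):
    """Return the 2**n energies E_alpha of one REM sample.

    Assumes E_alpha i.i.d. Gaussian with mean 0 and variance n, i.e. with
    density exp(-E**2 / (2 n)) / sqrt(2 pi n).
    """
    rng = random.Random(seed)
    sd = math.sqrt(n)  # random.gauss takes the standard deviation, not the variance
    return [rng.gauss(0.0, sd) for _ in range(2**n)]


if __name__ == "__main__":
    n = 12
    energies = sample_rem_energies(n, seed=0)
    densities = [e / n for e in energies]  # energy densities epsilon_alpha = E_alpha / N
    print(len(energies), min(densities), max(densities))
```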

=== Problem 1: the energy landscape of the REM === | === Problem 1: the energy landscape of the REM === | ||

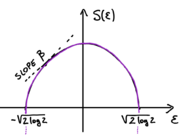

In this problem we study the random variable <math> \mathcal{N}_N(E)dE </math>, that is the number of configurations having energy <math> E_\alpha \in [E, E+dE] </math>. For large <math> N </math> this variable scales exponentially <math>\mathcal{N}_N(E)dE \sim e^{N S_N\left( E/N\right)}dE</math>. Let <math> \epsilon=E/N </math>. The goal of this Problem is to show that the asymptotic value of the entropy <math> S_N(\epsilon) </math>, that is self-averaging, is given by (henceforth, <math> S(\epsilon):=S_\infty(\epsilon) </math>): | |||

[[File:Entropy REM.png|thumb|left|x140px|Entropy of the Random Energy Model]]

<math display="block">

S_\infty(\epsilon):=\lim_{N \to \infty} S_N(\epsilon)=\begin{cases}

\log 2- \frac{\epsilon^2}{2} \quad &\text{ if } |\epsilon| \leq \sqrt{2 \, \log 2} \\

- \infty \quad &\text{ if } |\epsilon| >\sqrt{2 \, \log 2}

\end{cases}

</math>
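The asymptotic entropy above can be transcribed directly, which is convenient for comparing with numerics. In this sketch <code>EPS_C</code> denotes the edge <math>\sqrt{2\log 2}\approx 1.1774</math> of the region where <math>S(\epsilon)</math> is finite; the names are illustrative.

```python
import math

EPS_C = math.sqrt(2 * math.log(2))  # |epsilon| at which log 2 - epsilon**2 / 2 vanishes


def rem_entropy(eps):
    """Asymptotic REM entropy density S(eps), transcribed from the formula above."""
    if abs(eps) <= EPS_C:
        return math.log(2) - eps**2 / 2
    return -math.inf  # typically no configuration at this energy density


if __name__ == "__main__":
    for eps in (0.0, 0.5, 1.0, EPS_C, 1.5):
        print(f"eps = {eps:+.4f}   S = {rem_entropy(eps):.4f}")
```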

<br>

<ol>

<li> <em> Averages: the annealed entropy.</em> We begin by computing the annealed entropy <math> S_{\text{a}} </math>, which is defined by the average <math> \mathbb{E}({\mathcal{N}_N(E)} dE)= \text{exp}\left(N S_{\text{a}}\left( E/N \right)+ o(N)\right)dE </math>. Compute this function using the representation <math> \mathcal{N}_N(E)dE= \sum_{\alpha=1}^{2^N} \chi_\alpha(E) dE \;</math> [with <math> \chi_\alpha(E)=1</math> if <math> E_\alpha \in [E, E+dE]</math> and <math> \chi_\alpha(E)=0</math> otherwise]. </li>

</ol>
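One way to check the annealed computation of point 1 numerically, assuming the Gaussian convention <math>E_\alpha\sim\mathcal{N}(0,N)</math>. Because every indicator <math>\chi_\alpha(E)</math> has the same mean, <math>\mathbb{E}(\mathcal{N}_N)</math> over an energy-density bin <math>[\epsilon,\epsilon+\Delta\epsilon)</math> equals <math>2^N</math> times the probability that a single Gaussian energy falls in that bin. The sketch computes this exactly with <code>math.erfc</code> and compares it with a Monte Carlo average over REM samples; the bin width and all names are choices made for this illustration.

```python
import math
import random


def prob_energy_density_in(n, eps, d_eps):
    """P(E / n in [eps, eps + d_eps)) for one energy E ~ Gaussian(mean 0, variance n).

    E / sqrt(n) is a standard Gaussian Z, so this is P(a <= Z < b) with
    a = eps * sqrt(n) and b = (eps + d_eps) * sqrt(n). Writing it with erfc keeps
    precision in the upper tail (eps >= 0); it underflows to 0 once a exceeds ~37.
    """
    a = eps * math.sqrt(n)
    b = (eps + d_eps) * math.sqrt(n)
    return 0.5 * (math.erfc(a / math.sqrt(2)) - math.erfc(b / math.sqrt(2)))


def log_expected_count(n, eps, d_eps):
    """log E(N_N) for the bin: all 2**n indicators chi_alpha have the same mean."""
    return n * math.log(2) + math.log(prob_energy_density_in(n, eps, d_eps))


def sampled_mean_count(n, eps, d_eps, n_samples=200, seed=0):
    """Monte Carlo average, over REM samples, of the number of configurations in the bin."""
    rng = random.Random(seed)
    sd = math.sqrt(n)
    total = 0
    for _ in range(n_samples):
        total += sum(1 for _ in range(2**n) if eps <= rng.gauss(0.0, sd) / n < eps + d_eps)
    return total / n_samples


if __name__ == "__main__":
    n, eps, d_eps = 10, 0.2, 0.1
    print("exact E(count)  :", math.exp(log_expected_count(n, eps, d_eps)))
    print("sampled E(count):", sampled_mean_count(n, eps, d_eps))
    for big_n in (100, 1000, 10000):
        rate = log_expected_count(big_n, eps, 0.01) / big_n
        print(f"N={big_n:5d}  log E(count) / N = {rate:.4f}")
    print("log 2 - eps^2 / 2 =", math.log(2) - eps**2 / 2)
```

At fixed bin width, <code>log_expected_count</code> divided by <math>N</math> approaches <math>\log 2-\epsilon^2/2</math> as <math>N</math> grows; the bin width only contributes <math>o(N)</math> terms.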

<br>

<ol start="2">

<li><em> Self-averaging.</em> For <math> |\epsilon| \leq \sqrt{2 \, \log 2} </math> the quantity <math> \mathcal{N}_N </math> is self-averaging: its distribution concentrates around the average value <math> \mathbb{E}(\mathcal{N}_N) </math> when <math> N \to \infty </math>. Show this by computing the second moment <math>\mathbb{E}(\mathcal{N}^2_N)</math>. Deduce that <math> S(\epsilon)= S_{\text{a}}(\epsilon)</math> when <math> |\epsilon| \leq \sqrt{2 \, \log 2} </math>. This self-averaging property no longer holds in the region where the annealed entropy is negative: why does one expect fluctuations to be relevant in this region?</li>

</ol>
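A Monte Carlo estimate of the ratio <math>\mathbb{E}(\mathcal{N}_N^2)/\mathbb{E}(\mathcal{N}_N)^2</math> appearing in the self-averaging criterion can make point 2 concrete; it is a sanity check, not a substitute for the second-moment calculation. Energies are again assumed Gaussian with mean 0 and variance <math>N</math>, and the names and parameters are illustrative.

```python
import math
import random


def moment_ratio(n, eps, d_eps, n_samples=400, seed=0):
    """Monte Carlo estimate of E(N_N**2) / E(N_N)**2, where N_N counts the REM
    configurations with E_alpha / n in [eps, eps + d_eps).

    Energies are assumed Gaussian with mean 0 and variance n. Returns math.nan
    if the bin was empty in every sample (ratio not estimable).
    """
    rng = random.Random(seed)
    sd = math.sqrt(n)
    counts = []
    for _ in range(n_samples):
        counts.append(sum(1 for _ in range(2**n) if eps <= rng.gauss(0.0, sd) / n < eps + d_eps))
    m1 = sum(counts) / n_samples  # estimate of E(N_N)
    m2 = sum(c * c for c in counts) / n_samples  # estimate of E(N_N**2)
    return m2 / m1**2 if m1 > 0 else math.nan


if __name__ == "__main__":
    for n in (6, 8, 10):
        print(n, moment_ratio(n, eps=0.0, d_eps=0.1))
```

At <math>\epsilon=0</math> the estimate approaches 1 as <math>N</math> grows. For <math>|\epsilon|>\sqrt{2\log 2}</math> the bin is empty in almost every sample, so the estimate is controlled by the rare samples in which it is not (and the function returns <code>nan</code> when none occurs).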

<br>

<ol start="3">

<li> <em> Rare events.</em> For <math> |\epsilon| > \sqrt{2 \, \log 2} </math> the annealed entropy is negative: the average number of configurations with those energy densities is exponentially small in <math> N </math>. This implies that the probability of finding configurations with those energies is exponentially small in <math> N </math>: these configurations are rare. Do you have an idea of how to show this, using the expression for <math> \mathbb{E}(\mathcal{N}_N)</math>? What is the typical value of <math> \mathcal{N}_N </math> in this region? Putting everything together, derive the form of the typical value of the entropy density. </li>

</ol>
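For point 3, one possible route is Markov's inequality, <math>\mathbb{P}(\mathcal{N}_N\geq 1)\leq\mathbb{E}(\mathcal{N}_N)</math>, applied to the number of configurations with energy density at most <math>-\epsilon</math>. The sketch evaluates the logarithm of this bound for Gaussian energies of variance <math>N</math> (assumed convention), using <code>math.erfc</code> and, if it underflows, the leading Gaussian tail asymptotics; the function names are illustrative.

```python
import math


def log_gauss_upper_tail(x):
    """log P(Z >= x) for a standard Gaussian Z and x > 0."""
    tail = 0.5 * math.erfc(x / math.sqrt(2))
    if tail > 0.0:
        return math.log(tail)
    # erfc underflowed: leading asymptotics P(Z >= x) ~ exp(-x**2 / 2) / (x * sqrt(2 pi))
    return -x * x / 2 - math.log(x * math.sqrt(2 * math.pi))


def log_markov_bound(n, eps):
    """log E(#{alpha : E_alpha <= -eps * n}) for eps > 0 and energies Gaussian(0, n).

    By symmetry this equals n log 2 + log P(Z >= eps * sqrt(n)); by Markov's
    inequality, P(at least one such alpha exists) <= exp(log_markov_bound(n, eps)).
    """
    return n * math.log(2) + log_gauss_upper_tail(eps * math.sqrt(n))


if __name__ == "__main__":
    eps = 1.5
    for n in (50, 100, 200, 400):
        lb = log_markov_bound(n, eps)
        print(f"N={n:3d}  log bound = {lb:8.2f}   log bound / N = {lb / n:+.4f}")
    print("log 2 - eps^2 / 2 =", math.log(2) - eps**2 / 2)
```

For <math>\epsilon=1.5>\sqrt{2\log 2}</math> the bound decays exponentially in <math>N</math>, with rate approaching <math>\log 2-\epsilon^2/2<0</math>; inside the band the bound exceeds 1 and carries no information.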

<br>

<ol start="4">

<li> <em> The energy landscape.</em> When <math>N </math> is large, if one picks a configuration at random (with a uniform measure over all configurations), what will its energy be? What is the equilibrium energy density of the system at infinite temperature? What is the typical value of the ground-state energy? Deduce these from the entropy calculation: are they consistent with Alberto's lecture? </li>

</ol>
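The questions of point 4 can be explored on a single sampled instance. The sketch below (illustrative names; energies assumed Gaussian with mean 0 and variance <math>N</math>) reports the energy density of one uniformly chosen configuration, the mean energy density over all <math>2^N</math> configurations (the uniform, <math>\beta=0</math>, average), and the lowest energy density in the sample.

```python
import math
import random

EPS_C = math.sqrt(2 * math.log(2))  # sqrt(2 log 2), edge of the REM entropy support


def landscape_summary(n, seed=0):
    """Sample one REM instance (energies Gaussian with mean 0, variance n) and return
    (energy density of one uniformly chosen configuration,
     mean energy density over all 2**n configurations,
     lowest energy density in the sample)."""
    rng = random.Random(seed)
    sd = math.sqrt(n)
    energies = [rng.gauss(0.0, sd) for _ in range(2**n)]
    random_conf = energies[rng.randrange(2**n)] / n  # uniform pick of one configuration
    mean_density = sum(energies) / (len(energies) * n)  # uniform (beta = 0) average
    ground_state = min(energies) / n
    return random_conf, mean_density, ground_state


if __name__ == "__main__":
    for n in (10, 14, 18):
        r, m, g = landscape_summary(n)
        print(f"N={n:2d}  random conf {r:+.3f}  mean {m:+.4f}  min {g:+.3f}"
              f"  (-EPS_C = {-EPS_C:.3f})")
```

At these accessible sizes the sampled ground-state energy density typically lies noticeably above <math>-\sqrt{2\log 2}\approx-1.177</math>: finite-<math>N</math> corrections to the minimum of <math>2^N</math> Gaussians decay only like <math>(\log N)/N</math>, so the comparison with the entropy calculation is qualitative here.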

<!--'''Comment:''' this analysis of the landscape suggests that in the large <math> N </math> limit, the fluctuations due to the randomness become relevant when one looks at the bottom of the energy landscape, close to the ground state energy. We show below that this intuition is correct, and corresponds to the fact that the partition function <math> Z </math> has an interesting behaviour at low temperature.-->

<!--<ul>'''Example: typical vs average.''' Often, quantities like <math> Y_N </math> have a distribution that for large <math> N </math> takes the form <math> P_{Y_N}(y) \sim e^{-N^\alpha g(y)+ \text{subleading}} </math> where <math> g(y) </math> is some non-negative function and <math> \alpha>0 </math>. This is called a <ins> large deviation form </ins> for the probability distribution, with <ins> speed </ins> <math> N^\alpha </math>. This distribution is of <math> O(1) </math> for the value <math> y^{\text{typ}} </math> such that <math> g(y^{\text{typ}})=0 =g'(y^{\text{typ}})</math>: this value is the <ins> typical value </ins> of <math> Y_N </math> (asymptotically at large <math> N </math>); all the other values of <math> y </math> are associated with a probability that is exponentially small in <math> N^\alpha</math>: they are <ins>exponentially rare</ins>.

Consider now an exponentially scaling quantity like <math> X_N = e^{N Y_N} </math>, and let us fix <math> \alpha=1 </math>. The asymptotic typical values <math> x^{\text{typ}} </math> and <math> y^{\text{typ}} </math> are related by:

<math display="block">

y^{\text{typ}} =\lim_{N \to \infty} \frac{\log x^{\text{typ}} }{N},

</math>

so the scaling of <math> x^{\text{typ}} </math> is <math> x^{\text{typ}}\sim e^{N y^{\text{typ}}} </math>. Let us now look at the scaling of the average.

The average of <math> X_N </math> can be computed with the <ins> saddle point approximation </ins> for large <math> N </math>:

<math display="block">

\mathbb{E}(X_N) =\int dy\, P_{Y_N}(y)\, e^{N y}= \int dy\, e^{N[y- g(y)]+o(N)} =e^{N [y^*-g(y^*)]+ o(N) },

</math>

where <math> y^* </math> is the point maximising the shifted function <math> \tilde{g}(y)= y-g(y)</math>, i.e. <math> g'(y^*)=1 </math>. Since <math> g'(y^{\text{typ}})=0 </math>, <math> y^* \neq y^{\text{typ}} </math>: the asymptotics of the average value of <math> X_N </math> differ from the asymptotics of the typical value. In particular, the average is dominated by rare events, i.e. realisations in which <math> Y_N </math> takes the value <math> y^*</math>, whose probability of occurrence is exponentially small.

</ul>-->

== Check out: key concepts ==

Self-averaging, average value vs typical value, rare events, quenched vs annealed.

== To know more ==

* [1] B. Derrida, ''Random-energy model: limit of a family of disordered models'', Phys. Rev. Lett. 45, 79 (1980).

* A note on terminology:

<div style="font-size:89%">

The terms “quenched” and “annealed” come from metallurgy and refer to the procedure in which you cool a very hot piece of metal: a system is quenched if it is cooled very rapidly (instantaneously changing its environment, for instance by putting it into cold water) and has to adjust to this new, fixed environment; it is annealed if it is cooled slowly, kept in (quasi-)equilibrium with its changing environment at all times. Now think of how you compute the free energy of a disordered system, and of the disorder as the environment. In the quenched protocol, you first compute the average over the configurations of the system (with the Boltzmann weight) keeping the disorder (environment) fixed, so the configurations have to adjust to the given disorder, just as the hot metal has to adjust itself to the new, fixed, cold water. Then you take the log and only afterwards average over the randomness, which plays the role of the environment (this last average is not even needed at large <math>N</math> if the free energy is self-averaging). In the annealed protocol instead, the disorder (environment) and the configurations are treated on the same footing and adjust to each other: you average over both simultaneously. The quenched case corresponds to equilibrating at fixed disorder, and it is justified when there is a separation of timescales and the disorder evolves much more slowly than the variables (the cold water changes temperature much more slowly than the metal you put in it); the annealed case corresponds to treating the disorder as a dynamical variable that co-evolves with the variables.

</div>

Latest revision as of 11:53, 11 March 2026
